Remote Code Execution Vulnerability in Google They Are Not Willing To Fix

April 14, 2023

This is a story about a security vulnerability in Google that allowed me to run arbitrary code on the computers of 50+ Google employees. Although Google initially considered my finding a serious security incident, later on, it changed its mind and stated that my finding is not, in fact, a vulnerability, but the intended behavior of their software.

Earlier this year, I was doing security research into dependency confusion. Dependency confusion is a software misconfiguration due to which a package that is intended to be pulled from a private package repository is instead downloaded from a public repository, for example, from npmjs.org in the case of NPM. In most scenarios, an attacker finds a name of a private package and uploads a package with the same name to the public repository. The attacker can include any code in the package and in most languages is able to run arbitrary code already at install time, making dependency confusion vulnerabilities particularly dangerous.

From the exploit complexity perspective, dependency confusion issues are easy to exploit. All you have to do is upload a package to the public package repository and wait for the package to be downloaded. This is something anyone can do and does not require any special skills. The hard part is identifying the private packages that are susceptible to dependency confusion. Usually, companies keep their private packages and the projects that utilize these packages private, so you just cannot look at a company’s GitHub and expect to find all the projects vulnerable to dependency confusion together with the names of private dependencies.

Luckily, the names of internal packages are usually not guarded with top-level security measures. For example, for code that is deployed to the front-end, the package names may be included in the code bundle. And while it is not possible to determine which packages would be downloaded due to dependency confusion issues, security researchers (and malicious actors) can upload all potential packages they find – the worst that can happen is that the uploaded package will not be downloaded.

Early this year, I started tinkering with the idea of automatically finding private package names from publicly available source code, hosted on sites like GitHub. This led me to find a large set of private package names which I used to discover a string of dependency confusion issues at various large tech companies.

My method for automatically discovering these package names was simple. I scanned GitHub repositories for lists of dependencies in common formats and looked for dependencies that appeared in a dependency list but had no matching package in the public package repository. For example, for Python, I looked at lists matching the output of the pip freeze command and for packages that were not present in PyPI. The search space was constrained to repositories owned by organizations related to major technology companies, such as Google or Microsoft, and to repositories owned by employees of these companies. I considered a GitHub user an employee if they had set their company in GitHub to a large tech company, e.g., they had the @google tag on their profile.
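
A minimal sketch of this kind of check (not the actual scanner used for this research) might look like the following. The function names and the regular expression are illustrative assumptions. The only external interface used is PyPI's JSON endpoint, https://pypi.org/pypi/NAME/json, which answers with 404 for projects that do not exist. The existence check is passed in as a parameter so the logic can be tried without network access.

```python
import re
import urllib.error
import urllib.request
from typing import Callable, List

# Matches pinned lines such as "requests==2.31.0", as printed by `pip freeze`.
PINNED_LINE = re.compile(r"^([A-Za-z0-9][A-Za-z0-9._-]*)==\S+$")


def pinned_names(requirements_text: str) -> List[str]:
    """Return the package names from pinned `name==version` lines."""
    names = []
    for raw_line in requirements_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        match = PINNED_LINE.match(line)
        if match:
            names.append(match.group(1))
    return names


def exists_on_pypi(name: str) -> bool:
    """Ask PyPI's JSON API whether a project with this name exists."""
    url = f"https://pypi.org/pypi/{name}/json"
    try:
        with urllib.request.urlopen(url, timeout=10) as response:
            return response.status == 200
    except urllib.error.HTTPError as error:
        if error.code == 404:
            return False
        raise


def unclaimed_names(
    requirements_text: str,
    exists: Callable[[str], bool] = exists_on_pypi,
) -> List[str]:
    """Return pinned names for which the existence check reports no package."""
    return [name for name in pinned_names(requirements_text) if not exists(name)]
```

Calling unclaimed_names on the contents of a requirements.txt file returns the candidate names worth a closer look. A name missing from PyPI is only a hint that a package is private, not proof of a dependency confusion issue.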

One of the matches of my scanner came from a public repository belonging to a Google employee. My scanner found a reference to a non-existent package (called package X from here on [1]) in one of the files in the repository. A GitHub search for the package name revealed three more references to the same package on GitHub. All the matches came from employees and ex-employees of Google, so this gave me early confidence that the package was owned by Google.

The reference to package X was in a requirements.txt file, meaning it was a Python package. This was good news since Python is a language with unarguably the worst dependency management system. With Python, it is extremely easy to misconfigure your installation scripts to be prone to dependency confusion. Just to show how easy it is to shoot yourself in the foot, here is an example shell command that installs private package kaboom from private registry https://example.com and is vulnerable to dependency confusion:

pip install kaboom --extra-index-url https://example.com

A reader who works with Python on a daily basis and is up-to-date on security knows that --extra-index-url is a no-go for installing private packages. Although this has been known for years, --extra-index-url is still widely used because ordinary users do not consider the security implications of the parameter. I will not turn this post into a rant about Python and pip, but if you are interested in this topic, there is a discussion with 100+ comments on GitHub regarding --extra-index-url: https://github.com/pypa/pip/issues/8606.
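
The reason the parameter is dangerous is that pip gives no index priority over another: it gathers candidates from every configured index and normally installs the highest version it finds. The toy model below illustrates that selection rule. It is a simplification and not pip's actual resolver. The index contents and version numbers are made up, and the version parsing only handles plain dotted integers.

```python
from typing import Dict, List, Tuple


def version_key(version: str) -> Tuple[int, ...]:
    """Turn '1.2.10' into (1, 2, 10) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def pick_candidate(
    name: str, indexes: Dict[str, Dict[str, List[str]]]
) -> Tuple[str, str]:
    """Return (index_url, version) of the highest version found on any index."""
    candidates = [
        (version_key(version), index_url, version)
        for index_url, packages in indexes.items()
        for version in packages.get(name, [])
    ]
    if not candidates:
        raise LookupError(f"no index provides {name}")
    # The index a candidate came from plays no role; only the version matters.
    _, index_url, version = max(candidates)
    return index_url, version


# Hypothetical index contents for the kaboom example.
indexes = {
    "https://pypi.org/simple": {"kaboom": ["99.0.0"]},      # attacker's upload
    "https://example.com": {"kaboom": ["1.2.0", "1.3.0"]},  # private registry
}
print(pick_candidate("kaboom", indexes))
```

In this model the public upload wins simply because its version number is higher, even though the private registry was listed on the command line.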

After finding package X in the GitHub repository, the next step was to upload a public package with that name to PyPI, the default Python package repository. In the installation script of the public package, I included code that would send an HTTP request with the current hostname and username to a web server I hosted. That allowed me to detect when the package was installed at Google and is the go-to method for confirming dependency confusion vulnerabilities.

After uploading the package, it took about two weeks until I started receiving downloads at a rate of roughly one download per day. The downloads came from computers or virtual desktops of Google employees around the world. They spanned different roles like software engineers and firmware engineers (according to LinkedIn) and different countries, including the United States, Belgium, and China. Interestingly, the downloads came solely from hosts that were associated with specific people and not from automated builds or from Google services. My hypothesis is that package X is an internal tool that is only used locally and not part of any Google product deployments.

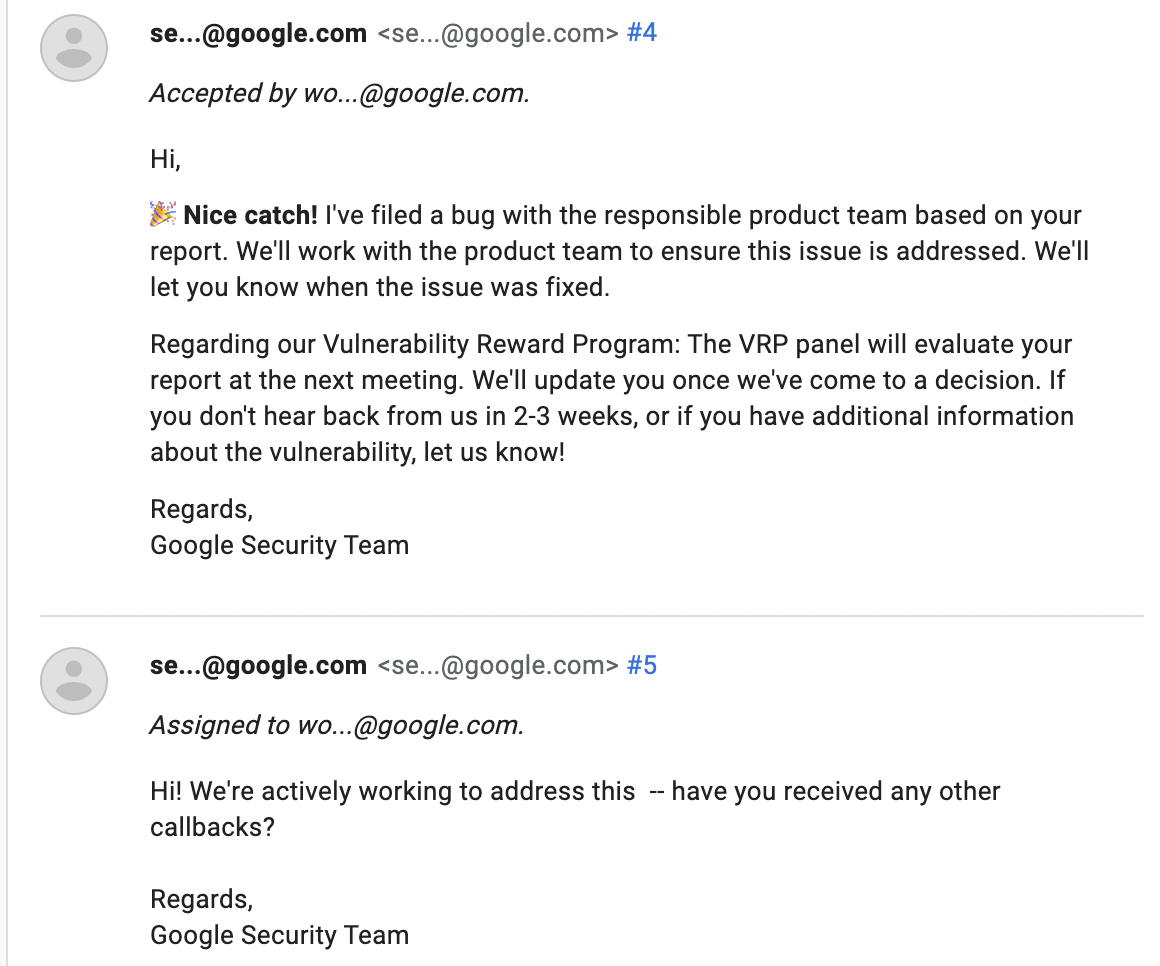

Upon receiving the first real download within Google, I reported the vulnerability to Google’s vulnerability reward program. At first, Google took action quickly and classified the bug as S0, which is the highest severity in their vulnerability reward program. They fixed the issue in about two weeks and awarded a bounty of $500 [2]. During these two weeks, the package was installed on roughly 15 unique hosts.

To add more credibility to the story, here are the details of a few hosts where my version of package X was installed:

| IP address | Hostname | Username |
|---|---|---|
| 34.78.140.88 | rgouthiere-cloudtop-1.c.googlers.com | rgouthiere |
| 34.66.121.195 | jianglai.c.googlers.com | jianglai |
| 203.208.61.15 | yanghuang2.sha.corp.google.com | yanghuang |
| 34.82.242.240 | bluerust.c.googlers.com | root |
| 34.145.213.122 | abcd.c.googlers.com | pdelong |
| 104.132.153.85 | martina.lon.corp.google.com | martinacocco |

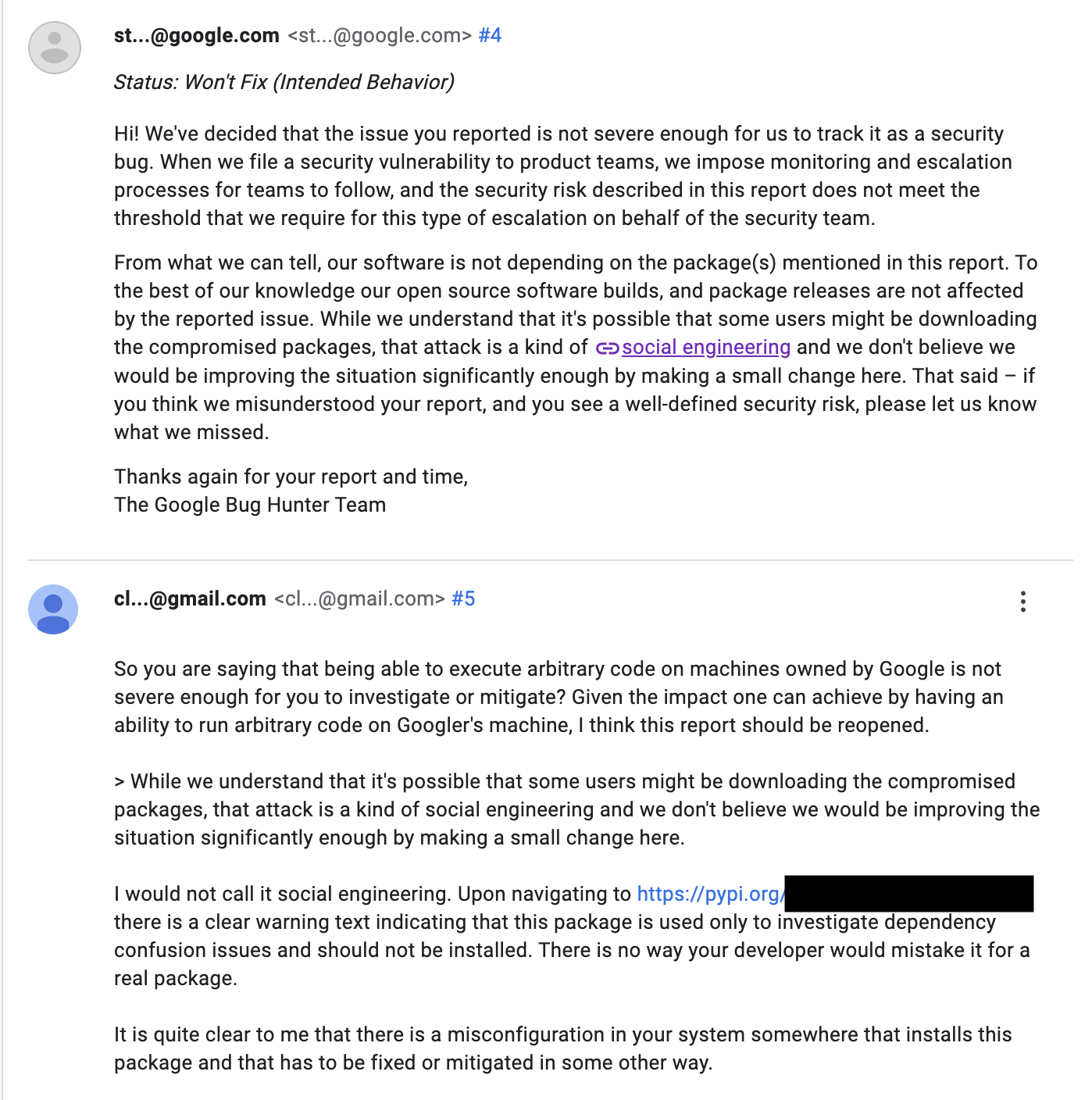

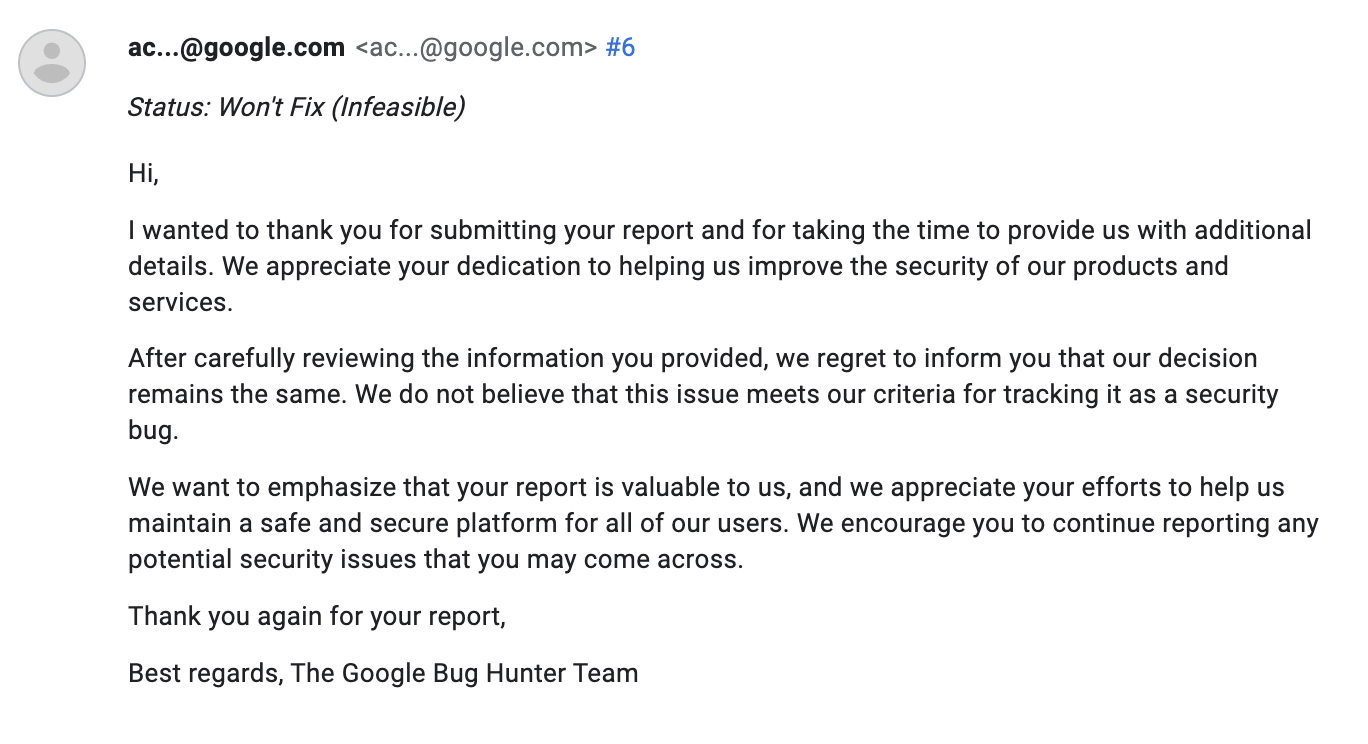

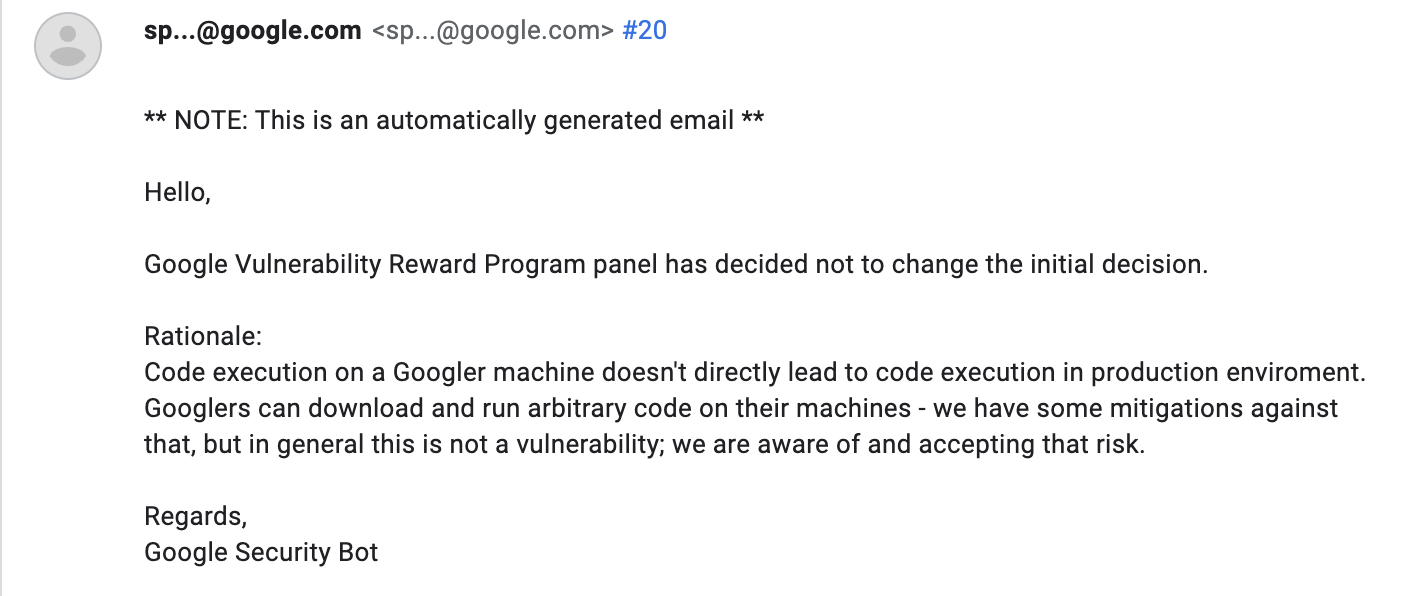

A few weeks after the patch, I once again started receiving downloads from employee devices. I made a new report to Google, saying that the issue had resurfaced. Surprisingly, this time Google closed my report, stating that it is not in fact a security vulnerability and that the software is working as intended. They also said that the vulnerability included social engineering, which is out of scope of the vulnerability reward program. This was confusing for me since the bug involves zero social engineering. I made several attempts to highlight the impact of this bug and how it does not involve social engineering. In the end, their decision was the same: everything is working as intended.

Now, there are several possible reasons why Google did not think this is a problem and decided not to pursue fixing it:

- They did not understand the problem. I think this option is the most likely one because when I submitted the first report, it was assigned the highest severity possible and promptly fixed. That happened because the case manager understood the problem and its impact. It is possible that the case manager who handled my new report simply did not understand the vulnerability and the associated risks.

- There is no actual risk associated with downloading these packages. Since I have not worked at Google, I do not know what their security practices are. It is possible that they run everything in isolated environments where the associated risk is extremely low. Nevertheless, the affected environments should still contain some information because they are not just plain OS installations with no data. There must be something there, like proprietary source code or proprietary inverted binary trees.

- There actually is “social engineering” involved. It is possible that by social engineering they meant that users install the package by themselves from PyPI and somehow mistake it for the internal package. I find that scenario unlikely because it does not explain the few weeks during which the issue was fixed.

- The bug cannot be exploited by a malicious user since I claimed the package. This is true since only one person can claim a package on PyPI, but it would definitely be a very odd security practice.

- They simply do not care.

At the time of writing, it has been a week since our last communication and the Python package is still being downloaded by Googlers every day. If I wanted to, I could replace the current version of the package with something malicious, and it would start running on Google employees’ computers and virtual desktops.

As far as fixing the problem goes, Google has had a very easy way to fix the specific issue from the start: they could simply have package X removed from PyPI by PyPI staff and have the name blacklisted to prevent the same package from being claimed again in the future. Other companies to whom I have reported dependency confusion issues have done this, and it guarantees that the same package cannot be used for dependency confusion in the future. Now, this is certainly not a robust fix since there may be other private packages that could be used in place of package X.

In fact, there were five more references to non-existent private packages in the same GitHub repository where I found package X. I will not disclose the names of these packages, but judging by their names, they are likely internal tools and probably installed in the same way as package X. If anyone is interested in penetrating Google (be your intent claiming bug bounties or something else), you can easily find these packages by running a scan similar to mine. And there are likely even more packages I did not find since I only scanned a small fraction of the data.

Anyway, this was the story of how I hacked into Google. Let’s see if Google takes another look at my report after I publish this post.

By the way, I plan to write another piece that dissects in more detail the methods I used to find these package names from open-source data. In case you are interested, stay tuned.

If you have any comments regarding this article, feel free to reach out to us at [email protected].

[1] I will not disclose the name of package X now since publishing its name will allow anyone (including malicious actors) to easily find other packages from Google susceptible to the same attack.

[2] (Updated 15 April 2023) If you are wondering why the bounty awarded was only $500, here is how Google explained it:


Giraffe Security